At AppMap, I work every day with companies, both large and small, that want to build and ship code more efficiently. We do that by helping developers onboard to new code faster, debug more efficiently, and avoid shipping architecture flaws.

Developing efficiently gets harder as codebases grow larger. On small codebases, concepts like modularity, boundaries, internal APIs, and clear areas of responsibility can seem like abstract or academic concerns. Compared to the immediate challenge of fixing the bug or finishing the feature for this week’s sprint, concerns about architectural cleanliness can feel unimportant.

Testability reflects modularity

So, it’s been interesting for us to work with developers who maintain monolithic Ruby and Java applications as large as 1 million lines of code. At this scale, clean architecture and modularity are critical concerns, not academic ones. The most compelling reason I have seen so far is testability.

On a small codebase, all available automated tests are typically run on each change. As the codebase grows and the testing time starts to exceed an hour or so, developers become conscious about speeding up the test suite. Slow tests are optimized, and parallel testing is used to speed up the overall test time.

But as a codebase becomes really large, there’s just no way to run the entire test suite in one hour. The only way that testing remains practical is to run a subset of the entire suite, and that’s where modularity becomes critical. For each proposed change, it’s necessary to identify the region of affected code, or “blast radius”, of that change. When the code is internally modular and uses defined service boundaries, these boundaries can be used to identify the subsets of the test suite that need to be run.

Scaling like the big boys

This is the approach used by Google. Google’s build and test system, Blaze[1], is able to take a sophisticated approach to dependency resolution. Blaze requires service boundaries to be defined using a strongly typed interface definition language (such as protocol buffers), making it possible to compute the blast radius of most code changes. To make this static analysis as effective as possible, Google also prefers the use of typed languages.

When a large codebase does not have any meaningful internal boundaries, computing a subset of tests to run for a particular changeset is more difficult. Even so, it is possible to compute an approximate blast radius by collecting data about a wide variety of code execution paths, and comparing these code paths with the known set of modified classes or packages. Sometimes, due to lack of coherence in the codebase, this is the only feasible approach.
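A rough sketch of this execution-path approach follows. The test names, class names, and the shape of the recorded data are assumptions made up for the example; in practice the data would come from whatever coverage or tracing tool the project already uses.

```python
# Hypothetical recorded data: for each test, the classes it executed
# the last time it ran.
CLASSES_EXERCISED = {
    "test_checkout_flow": {"Order", "Payment", "Cart"},
    "test_product_search": {"Product", "SearchIndex"},
    "test_refund": {"Order", "Payment", "Refund"},
    "test_login": {"User", "Session"},
}


def select_tests(changed_classes, classes_exercised=CLASSES_EXERCISED):
    """Return the tests whose recorded execution touched any changed class."""
    changed = set(changed_classes)
    return sorted(
        test
        for test, classes in classes_exercised.items()
        if classes & changed  # non-empty intersection means the test is affected
    )
```

Here `select_tests(["Payment"])` picks `test_checkout_flow` and `test_refund`. The result is only as good as the recorded data: a newly added class has no recorded executions and matches nothing, so it is sensible to fall back to a broader run when a change touches code the recordings have never seen.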

Real world examples

The open source e-commerce system Solidus[2] is a good project to look at for understanding some of the challenges of scaling and modularity. One immediate observation: Solidus does not use the standard Rails project organization. Rather, it’s split into five different Ruby libraries (“gems”): core, api, backend, frontend, and sample. This segmentation alone is enough to drive a significant amount of modularity.

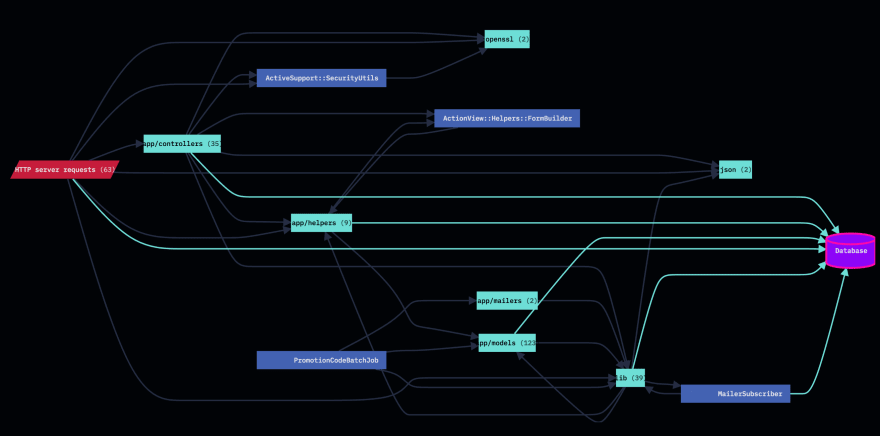

To present a good example of internal code organization, I found a large open source project that is similar to Solidus. Then I created this diagram[3] by analyzing over 5,000 test cases in its test suite.

One thing that stands out in this diagram is the database access occurring from many different parts of the code. One approach to improving the maintainability of this code might be to re-organize database access so that it occurs from a dedicated package. Routing all the database access through a dedicated and well-structured package can help to confine the “blast radius” of schema changes.
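One way to quantify how scattered the database access is would be to tally which packages issue queries. The sketch below assumes a hypothetical list of recorded events, each pairing a package with the SQL it ran; the package names, queries, and event format are invented for illustration and are not the output format of any particular tool.

```python
from collections import Counter

# Hypothetical recorded events: (package that issued the query, SQL text).
QUERY_EVENTS = [
    ("app/controllers", "SELECT * FROM orders WHERE id = 1"),
    ("app/models", "SELECT * FROM products"),
    ("app/helpers", "SELECT COUNT(*) FROM users"),
    ("app/models", "UPDATE orders SET state = 'paid' WHERE id = 1"),
    ("lib/reports", "SELECT * FROM orders"),
]


def queries_by_package(events):
    """Count how many queries each package issued."""
    return Counter(package for package, _sql in events)


def packages_outside(events, allowed):
    """List the packages that query the database but are not in the
    set of packages designated for database access."""
    return sorted({package for package, _sql in events if package not in allowed})
```

With `app/models` as the only designated package, `packages_outside` reports the controllers, helpers, and reports packages as places where database access could be moved. Tracking that list over time gives a simple measure of progress toward a dedicated data access package.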

Recommendations

If you inherit a large codebase, you don’t have much control over the internal architecture. If it’s already devolved into a “ball of mud”, you have to work with it as it is. Attack the testing problem with a multi-faceted strategy.

- Begin to add internal modularity.

- Identify and speed up particularly slow tests.

- Collect data about code execution paths and use it to compute your best-guess blast radius for a given change.

If your codebase is medium-sized and growing, recognize the importance of modularity and start to head in that direction. You have some time to get there, but it’s useful to get started now. Some concrete things you can do:

- Measure the modularity of the code, and look for some obvious areas of deficiency.

- Set some modularity goals, and drive towards those goals over time. Having these goals will also help the business owners to understand how the time being spent on this effort is paying dividends. Good initial goals might be to centralize the database access in a single package, or to refactor domain logic out of the controllers and models and into dedicated packages.

- Educate stakeholders about a basic fact: the faster the tests run, the more quickly and frequently the product can be released.

Everyone understands, and loves, the value of speed!

Open source resources

- [1] Bazel, a build and test tool based on Google Blaze.

- [2] Solidus, an e-commerce system, written in Ruby on Rails.

- [3] AppMap, a tool to record, display, and analyze end-to-end code and data flows.

- Shopify/packwerk, a Ruby library for defining and enforcing modularity.

Originally posted on Dev.to